Thanks a lot for this great library!

I recently ran into a problem while rehydrating server-side rendered markup that contained a react-media component. I'm pretty sure it was because the server-side delivered markup was the desktop version, and on the client the component immediately rendered the mobile markup when the respective media query matched.

With React 16 it's no longer necessary to have 100% matching markup when rehydrating; React will try to patch the DOM as best as it can. In practice this can still lead to issues if the DOM is really different, resulting in an inconsistent DOM that can be a mix of the desktop and the mobile markup.

The React docs suggest a two-pass render for such scenarios that will avoid the problem:

> If you intentionally need to render something different on the server and the client, you can do a two-pass rendering. Components that render something different on the client can read a state variable like `this.state.isClient`, which you can set to true in `componentDidMount()`. This way the initial render pass will render the same content as the server, avoiding mismatches, but an additional pass will happen synchronously right after hydration. Note that this approach will make your components slower because they have to render twice, so use it with caution.

Maybe it could be helpful to move the call to `this.updateMatches` from cWM (`componentWillMount`) to cDM (`componentDidMount`). This is certainly a drawback for client-side-only apps though, as it could add a possibly unnecessary render.

One approach could be to remove the default for the `defaultMatches` prop. As this property is only needed for server-side rendering, we could invoke the two-phase render only when this prop is provided by the user. What do you think?
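To make the proposal concrete, here is a minimal, dependency-free sketch of the decision it implies. `planRender` and its return shape are hypothetical names for illustration and not part of react-media; `queryMatchesNow` stands in for whatever the client reports for the media query (for example `window.matchMedia(query).matches`).

```javascript
// Hypothetical helper (not react-media's API): models the proposed behaviour.
// defaultMatches is undefined unless the user passes the prop, i.e. for SSR.
function planRender(defaultMatches, queryMatchesNow) {
  if (defaultMatches === undefined) {
    // Client-only app: evaluate the query before the first render,
    // so no second pass is needed.
    return { firstPass: queryMatchesNow, secondPass: null };
  }
  // SSR: the first pass mirrors the server markup so hydration matches.
  // A second pass after mount is only needed if the real result differs.
  return {
    firstPass: defaultMatches,
    secondPass: queryMatchesNow === defaultMatches ? null : queryMatchesNow,
  };
}
```

In this model a client-only app never pays for the extra render, while a server-rendered app hydrates with the server's assumption and corrects itself after mount.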

I have a usage question about WebGL.

Recently, I had to render, in real time, a post-processed image from a given geometry.

My idea was:

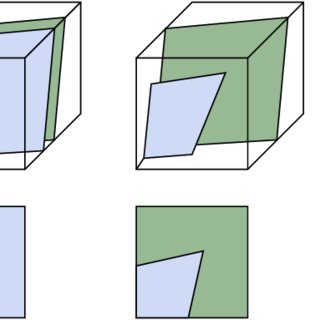

- the geometry is projected onto the screen by the vertex shader

- a first fragment shader is used to render this geometry offscreen

- a second fragment shader post-processes the offscreen image and displays the result on the canvas.

How I implemented it:

I wrote a first set of two shaders for the offscreen render. It lets me draw the geometry to a texture, using a framebuffer.

For the second part, I created a second GLSL program. Here, the vertex shader is used to project a rectangle that covers the whole screen. The fragment shader picks the appropriate pixel from the offscreen texture using a `sampler2D`, and performs all its post-processing.
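As an illustration of the second pass described above, here is a rough sketch of the data and shader sources such a program might use. All names (`QUAD_VERTICES`, `aPosition`, `uScene`, `vUv`, `clipToUv`) are assumptions, and the colour inversion is only a placeholder effect, not the post-process from the question.

```javascript
// Full-screen quad as two triangles, given directly in clip space,
// so the vertex shader needs no projection matrix.
const QUAD_VERTICES = new Float32Array([
  -1, -1,   1, -1,  -1, 1,
  -1,  1,   1, -1,   1, 1,
]);

// Pass-through vertex shader: forwards the position and derives UVs from it.
const POST_VERTEX_SHADER = `
attribute vec2 aPosition;
varying vec2 vUv;
void main() {
  vUv = aPosition * 0.5 + 0.5; // clip space [-1, 1] -> texture space [0, 1]
  gl_Position = vec4(aPosition, 0.0, 1.0);
}`;

// Fragment shader: samples the offscreen texture and post-processes it.
const POST_FRAGMENT_SHADER = `
precision mediump float;
uniform sampler2D uScene;
varying vec2 vUv;
void main() {
  vec4 color = texture2D(uScene, vUv);
  // Placeholder effect (an assumption): invert the colours.
  gl_FragColor = vec4(1.0 - color.rgb, color.a);
}`;

// Same mapping as in the vertex shader, in plain JS for sanity checks.
function clipToUv([x, y]) {
  return [x * 0.5 + 0.5, y * 0.5 + 0.5];
}
```

Because the quad is already in clip space, the second vertex shader stays trivial; nearly all of the work in this pass happens in the fragment shader.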

This seems odd to me, for two reasons:

- In order to be 'renderable', the offscreen texture has to be created with a power-of-two size, and thus can be significantly bigger than the canvas itself.

- Using a second vertex shader seems redundant. Is it possible to skip this step and go directly to the second fragment shader, to draw the offscreen texture to the canvas?

So, the big question is: what is the proper way to achieve this? What am I doing right, and what am I doing wrong?

Thank you for your advice :)

paulinodjm

1 Answer

> In order to be 'renderable', the offscreen texture has to be created with a power-of-two size, and thus can be significantly larger than the canvas itself.

No, it does not. It only needs to be when you need mipmapped filtering; creating and rendering to NPOT (non power of two) textures with `LINEAR` or `NEAREST` filters is totally fine. Note that NPOT textures only support `CLAMP_TO_EDGE` wrapping.

> Using a second vertex shader seems redundant. Is it possible to skip this step and go directly to the second fragment shader, to draw the offscreen texture to the canvas?

Unfortunately not. You *could* use one and the same vertex shader for both render passes by simply attaching it to both programs. However, this would require your vertex shader logic to apply to both geometries, which is rather unlikely, and you're switching programs anyway, so there is nothing to gain here.
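For the first point, a canvas-sized NPOT render target could be set up roughly like this. It is a sketch using standard WebGL 1 calls; the function name `createRenderTarget` and the returned object shape are assumptions made for illustration.

```javascript
// Creates a texture of arbitrary (possibly NPOT) size plus a framebuffer
// that renders into it. `gl` is assumed to be a WebGLRenderingContext.
function createRenderTarget(gl, width, height) {
  const texture = gl.createTexture();
  gl.bindTexture(gl.TEXTURE_2D, texture);
  // Allocate storage without uploading pixel data.
  gl.texImage2D(gl.TEXTURE_2D, 0, gl.RGBA, width, height, 0,
                gl.RGBA, gl.UNSIGNED_BYTE, null);
  // NPOT textures: no mipmaps, so use a non-mipmapped filter...
  gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MIN_FILTER, gl.LINEAR);
  gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_MAG_FILTER, gl.LINEAR);
  // ...and clamp-to-edge wrapping on both axes.
  gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_WRAP_S, gl.CLAMP_TO_EDGE);
  gl.texParameteri(gl.TEXTURE_2D, gl.TEXTURE_WRAP_T, gl.CLAMP_TO_EDGE);

  const framebuffer = gl.createFramebuffer();
  gl.bindFramebuffer(gl.FRAMEBUFFER, framebuffer);
  gl.framebufferTexture2D(gl.FRAMEBUFFER, gl.COLOR_ATTACHMENT0,
                          gl.TEXTURE_2D, texture, 0);
  // Restore the default framebuffer (the canvas).
  gl.bindFramebuffer(gl.FRAMEBUFFER, null);
  return { texture, framebuffer, width, height };
}
```

A typical call would be `createRenderTarget(gl, canvas.width, canvas.height)`, so the offscreen texture matches the canvas instead of being rounded up to a power of two.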

LJᛃ